Download Microsoft.AI-900.NewDumps.2021-04-11.45q.tqb

| Field | Value |
| --- | --- |
| Vendor | Microsoft |
| Exam Code | AI-900 |
| Exam Name | Microsoft Azure AI Fundamentals |
| Date | Apr 11, 2021 |
| File Size | 2 MB |

Demo Questions

Question 1

For a machine learning process, how should you split data for training and evaluation?

- A. Use features for training and labels for evaluation.

- B. Randomly split the data into rows for training and rows for evaluation.

- C. Use labels for training and features for evaluation.

- D. Randomly split the data into columns for training and columns for evaluation.

Correct answer: B

Explanation:

Reference:

https://www.sqlshack.com/prediction-in-azure-machine-learning/
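The correct option for Question 1 (randomly splitting rows) can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not the method of any particular Azure tool; the `split_rows` helper, the 25% evaluation fraction, the seed, and the toy dataset are all assumptions made for the example.

```python
import random

def split_rows(rows, eval_fraction=0.25, seed=0):
    """Randomly assign whole rows to a training set and an evaluation set."""
    shuffled = list(rows)                      # copy so the caller's data is untouched
    random.Random(seed).shuffle(shuffled)      # seeded shuffle for repeatability
    n_eval = int(len(shuffled) * eval_fraction)
    return shuffled[n_eval:], shuffled[:n_eval]  # (training rows, evaluation rows)

# Hypothetical dataset: each row keeps its features and its label together.
rows = [{"feature_1": i, "feature_2": i * 2, "label": i % 2} for i in range(20)]
train_rows, eval_rows = split_rows(rows)
```

Every row lands in exactly one of the two sets, and each row keeps all of its columns, so both sets contain features and labels.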

Question 2

You are developing a model to predict events by using classification.

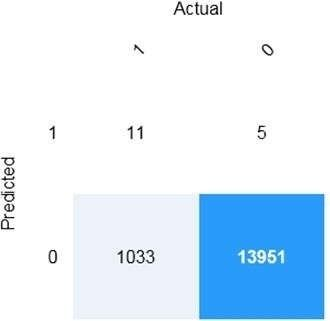

You have a confusion matrix for the model scored on test data as shown in the following exhibit.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic.

NOTE: Each correct selection is worth one point.

Correct answer: To work with this question, an Exam Simulator is required.

Explanation:

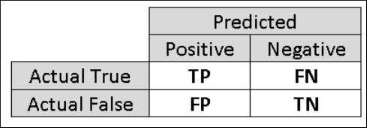

Box 1: 11

TP = True Positive.

The class labels in the training set can take on only two possible values, which we usually refer to as positive or negative. The positive and negative instances that a classifier predicts correctly are called true positives (TP) and true negatives (TN), respectively. Similarly, the incorrectly classified instances are called false positives (FP) and false negatives (FN).

Box 2: 1,033

FN = False Negative.
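To make the four cells of a confusion matrix concrete, the following sketch counts them from paired actual and predicted labels. The `confusion_counts` helper and the label lists are hypothetical and are not the data behind the exhibit. The last line shows one way the two values from the explanation could be combined, assuming the 11 true positives and 1,033 false negatives refer to the same positive class.

```python
def confusion_counts(actual, predicted, positive=1):
    """Count TP, FP, FN and TN for a binary classifier."""
    tp = fp = fn = tn = 0
    for a, p in zip(actual, predicted):
        if p == positive and a == positive:
            tp += 1          # predicted positive, actually positive
        elif p == positive:
            fp += 1          # predicted positive, actually negative
        elif a == positive:
            fn += 1          # predicted negative, actually positive
        else:
            tn += 1          # predicted negative, actually negative
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn}

# Hypothetical labels, not the data behind the exhibit.
actual    = [1, 1, 1, 0, 0, 0, 0, 1]
predicted = [1, 0, 1, 0, 1, 0, 0, 0]
counts = confusion_counts(actual, predicted)

# Recall = TP / (TP + FN), using only the two cells named in the explanation.
recall = 11 / (11 + 1033)
```

With those two values, recall comes out at roughly 1%, meaning the model would find very few of the actual positive events.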

Question 3

You build a machine learning model by using the automated machine learning user interface (UI).

You need to ensure that the model meets the Microsoft transparency principle for responsible AI.

What should you do?

- A. Set Validation type to Auto.

- B. Enable Explain best model.

- C. Set Primary metric to accuracy.

- D. Set Max concurrent iterations to 0.

Correct answer: B

Explanation:

Model explainability.

Most businesses run on trust, and being able to open the ML "black box" helps build transparency and trust. In heavily regulated industries like healthcare and banking, it is critical to comply with regulations and best practices. One key aspect of this is understanding the relationship between input variables (features) and model output. Knowing both the magnitude and direction of the impact each feature has on the predicted value (feature importance) helps you better understand and explain the model. With model explainability, we enable you to understand feature importance as part of automated ML runs.

Reference:

https://azure.microsoft.com/en-us/blog/new-automated-machine-learning-capabilities-in-azure-machine-learning-service/
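The idea of a feature's "magnitude and direction" of impact can be illustrated with a very simple perturbation check: nudge one feature at a time and see how the prediction moves. This is only a sketch under stated assumptions and is not how automated ML computes its explanations; the `feature_effects` helper, the toy `predict` model, and the sample rows are all hypothetical.

```python
def feature_effects(predict, rows, step=1.0):
    """Average change in prediction when each feature is increased by `step`."""
    effects = {}
    for name in rows[0]:
        total = 0.0
        for row in rows:
            nudged = dict(row)                 # copy the row, then change one feature
            nudged[name] = row[name] + step
            total += predict(nudged) - predict(row)
        effects[name] = total / len(rows)
    return effects

# Hypothetical model: the prediction rises with area and falls with age.
def predict(row):
    return 3.0 * row["area"] - 0.5 * row["age"]

rows = [{"area": 50.0, "age": 10.0}, {"area": 80.0, "age": 2.0}]
effects = feature_effects(predict, rows)
```

The sign of each entry in `effects` gives the direction of the feature's impact, and its absolute value gives the magnitude. Here `area` pushes predictions up more strongly than `age` pulls them down.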